Upload from any agent

Publish traces from 10+ supported agents directly in the CLI.

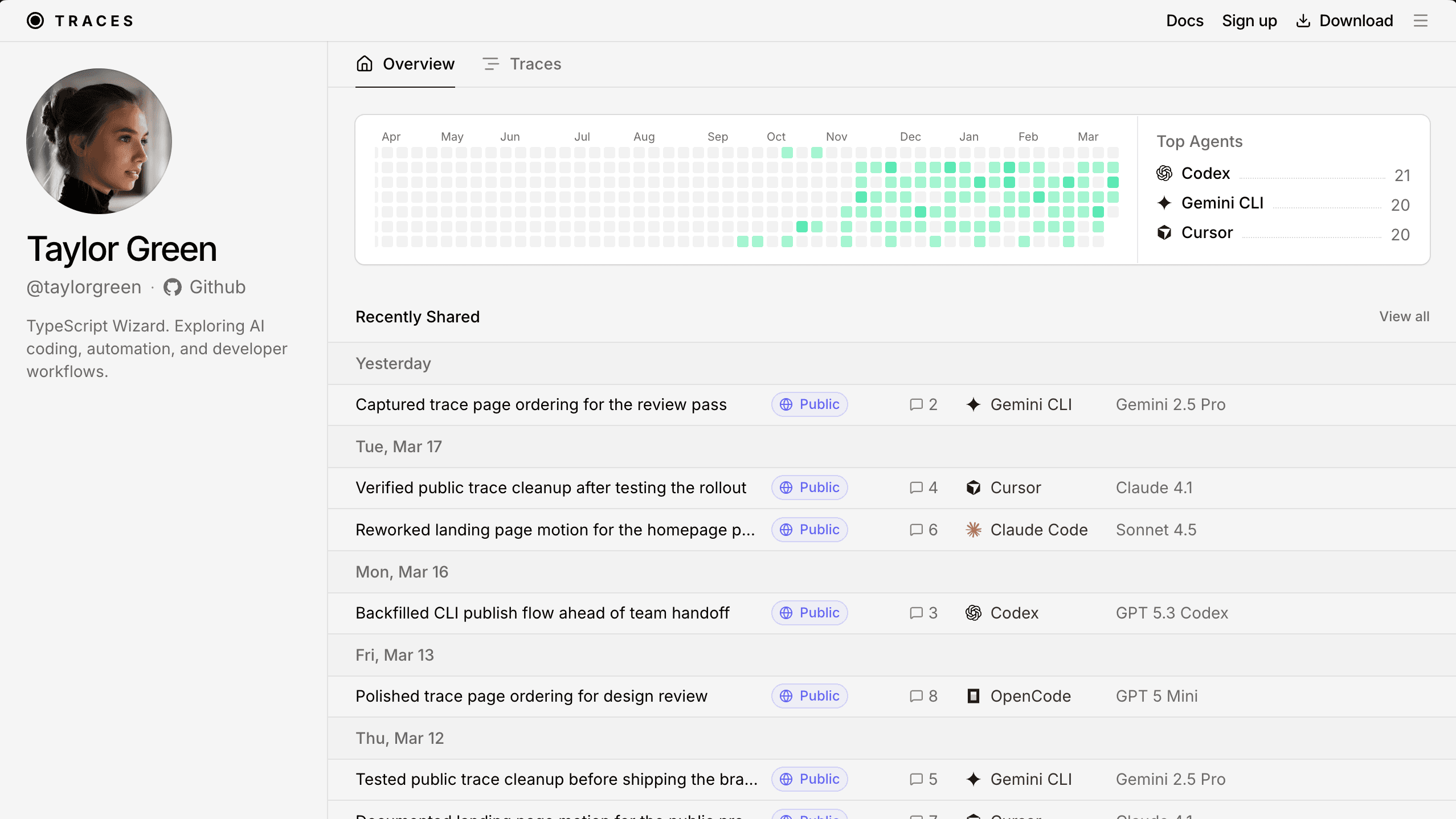

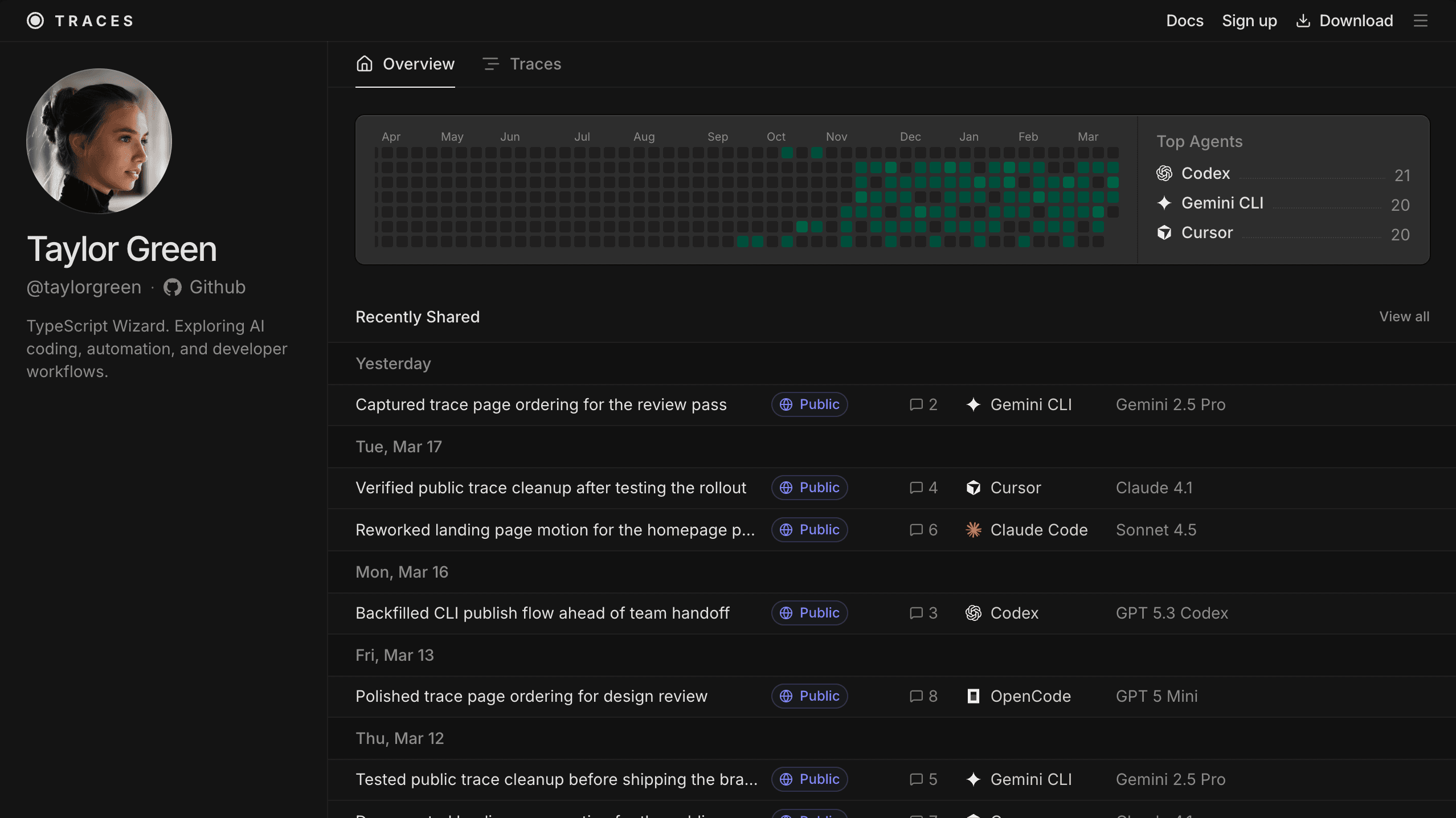

Share and collaborate on your coding agent sessions. Made for teams, and free to get started.

Download the CLI to get started

Works with your favourite agents

Start sharing traces from any agent in minutes.

Publish traces from 10+ supported agents directly in the CLI.

Copy the link & share your trace with your team or the world.

Download a full trace or continue working on someone else's.

Download a trace, or continue it in Claude Code. The open agent menu lists OpenCode, Pi, Claude Code, Codex, Cursor, Gemini CLI, Amp, Cline, OpenClaw, GitHub Copilot, Hermes, Droid, with Claude Code selected.

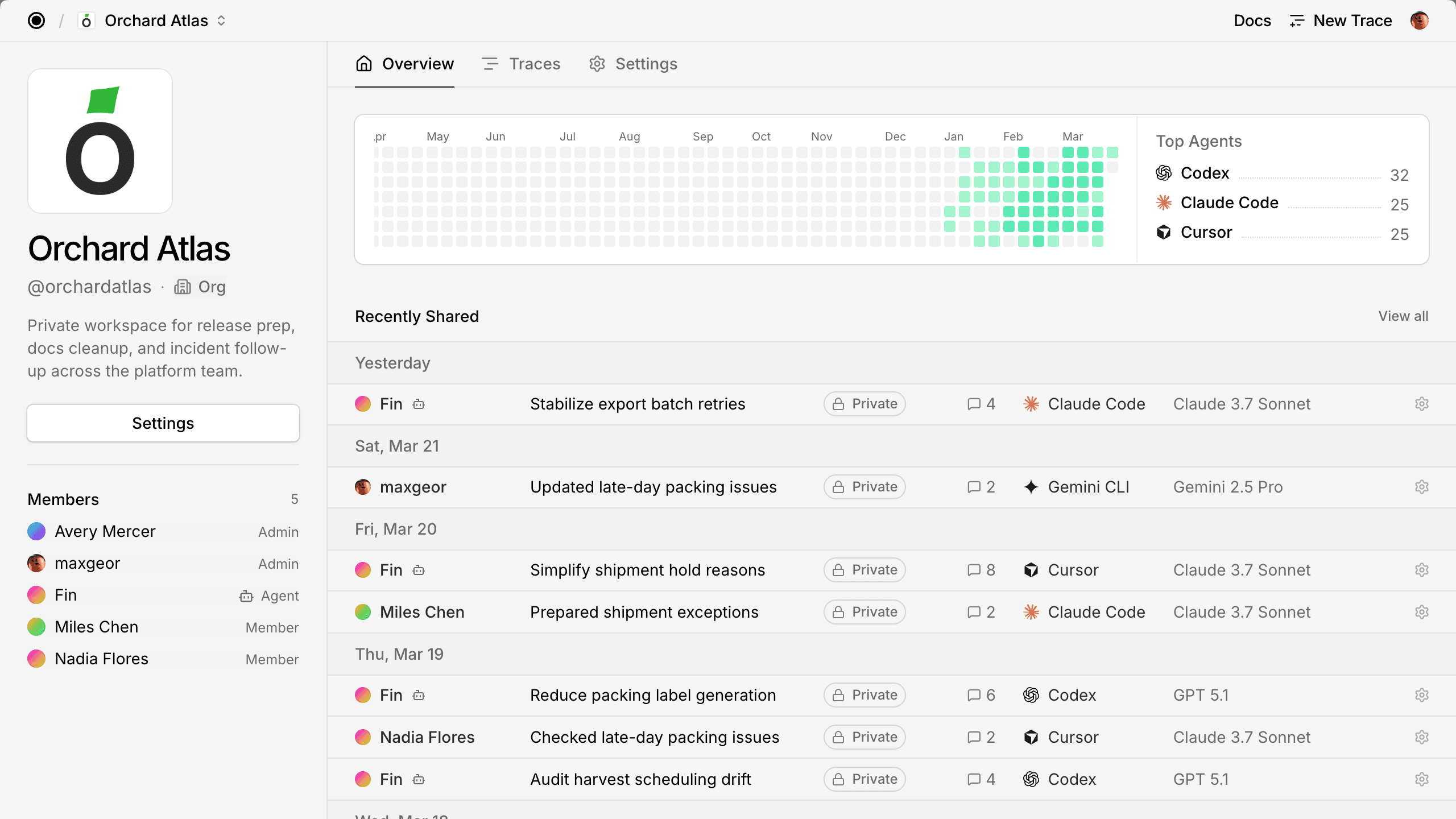

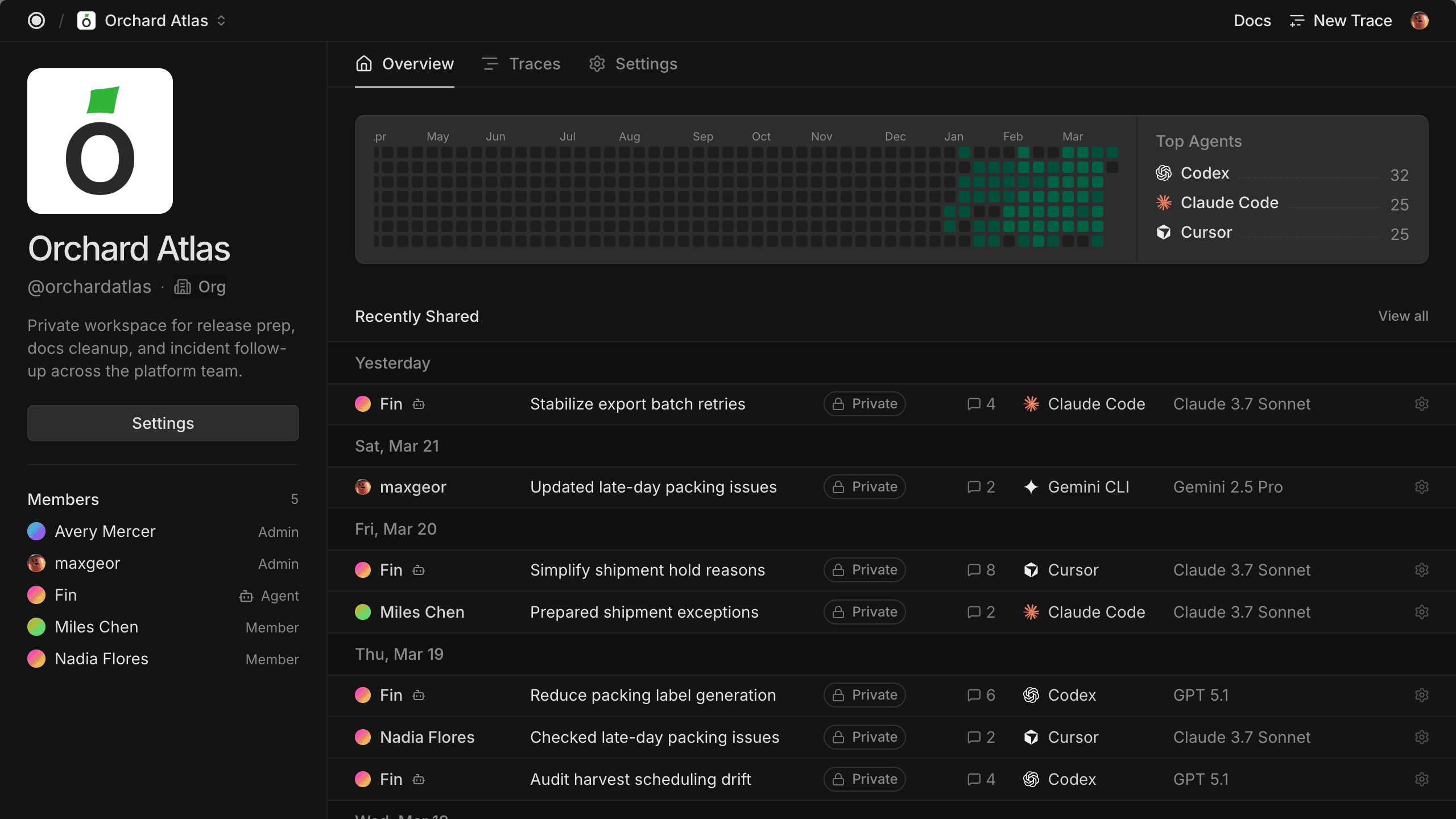

Traces for Teams

Traces gives teams one place to see work in progress, share context on finished work, and see which agents people are using.

Create your Team

“Github increasingly doesn't feel like the best place to understand the work done on a codebase. Agent traces provide a much more human-readable overview. Just started using traces.com. Feels quite nice.”

Millin Gabani, CEO of Worklayer

Share agent conversations without worrying about sensitive data.

Share your traces privately, directly, or publicly, so only the right people see them.

maujim · 102 messages

Diagnose and Enhance Voltage Error Reporting

Set team-level policies to control how your team can share traces.

Traces can be published as private, direct, or public.

We automatically strip sensitive data like API keys, emails & database credentials from traces on publish.
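The page doesn't say how the stripping works. One common approach is pattern-based redaction, where each match is replaced with a visible marker. The following is a minimal sketch of that technique, not Traces' implementation: the patterns are hypothetical examples and would miss many real secrets.

```python
import re

# Hypothetical patterns for illustration only; they are not Traces' rules.
# Order matters: database URLs go first because they can contain "@",
# which the email pattern would otherwise partially match.
REDACTION_PATTERNS = [
    # Database connection URLs, e.g. postgres://user:pass@host:5432/db
    re.compile(r"\b(?:postgres(?:ql)?|mysql|mongodb(?:\+srv)?|redis)://\S+"),
    # Email addresses
    re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    # API-key-like tokens with well-known prefixes (sk-..., ghp_..., AKIA...)
    re.compile(r"\b(?:sk-[A-Za-z0-9_-]{16,}|ghp_[A-Za-z0-9]{36}|AKIA[0-9A-Z]{16})\b"),
]

MARKER = "[REDACTED]"


def redact(text: str) -> str:
    """Replace every match of each pattern with a visible [REDACTED] marker."""
    for pattern in REDACTION_PATTERNS:
        text = pattern.sub(MARKER, text)
    return text
```

Replacing values with a fixed, visible marker instead of deleting them keeps the surrounding reasoning readable, which matches the published-run examples below.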

Shared the rollout trace after scrubbing [REDACTED], [REDACTED], and [REDACTED] from the assistant reply before sending the link to the team.

The published run keeps the reasoning intact while replacing keys, customer emails, and database URLs with clear [REDACTED] markers anyone can spot immediately.

Reviewers still understand what happened, but the sensitive values stay hidden behind [REDACTED] in every shared view.

Start simple & integrate more as you go.

Publish & manage traces directly from your terminal.

Let your agent share traces as you work.

Publish traces via API with your own tools, schedulers & workflows.

Automatically share traces on every commit from your CI/CD pipelines.

Free for individuals & small teams, flexible at scale.

Core: Free

Custom: Get in touch

See what ships, loop in the right agents, and share traces with git hooks & custom skills.

Team analytics preview showing Claude Code, Codex, Cursor, Gemini CLI, and Amp as the top agents, with an average session length of 47 minutes and 82.0 percent AI output.

A GitHub-style pull request timeline shows Maya Chen committing a docs update, a Traces bot comment with pull request trace links, two preview deployments, and a pull request mention.

Traces found for this PR:

Team member list showing Maya Chen as admin, Theo Brooks as member, Alice as the invited agent, Lina Park as member, and Ari Singh as member.

A terminal conversation showing a request to share a trace, the Traces skill that ran, and the private share link confirmation.

Great, I added back the function.

Share this trace

Skill Traces

Ran `traces share --trace-id w9g0svsjx839ngt --json`

Shared your trace to https://traces.com/s/w9g0svsjx839ngt as private.
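To script the same step the agent ran, a thin wrapper around the CLI can be enough. This minimal sketch only uses the `traces share --trace-id <id> --json` invocation from the transcript. The helper names are hypothetical. It also assumes the `traces` CLI is on `PATH` and that `--json` prints a single JSON document to stdout, since the output schema isn't documented here.

```python
import json
import subprocess
from typing import Any


def build_share_command(trace_id: str) -> list[str]:
    """Build argv for the share command seen in the transcript."""
    return ["traces", "share", "--trace-id", trace_id, "--json"]


def share_trace(trace_id: str) -> Any:
    """Run `traces share` and parse its stdout as JSON.

    Assumption: --json emits one JSON document; its fields are unknown,
    so the parsed value is returned untyped.
    """
    result = subprocess.run(
        build_share_command(trace_id),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return json.loads(result.stdout)
```

For example, `share_trace("w9g0svsjx839ngt")` would run the same command shown in the transcript. Building the argument list separately keeps it easy to check without invoking the CLI.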

Supported agents include Claude Code, Cursor, OpenCode, Codex, Gemini CLI, Pi, Amp, Cline, OpenClaw, GitHub Copilot, Hermes, and Droid.

Install the CLI to get started or make an account first.

Download the CLI to get started

or Create an account

Create your account

Browse traces & learn how people are using agents. Contribute by sharing your own traces.

Browse public traces

The user expresses concern about spending their youth worrying about deathbed regrets and asks whether gradient descent is the best way to live a good life. The assistant explains that locally optimizing based on immediate feedback is effective for short-term adjustments but insufficient as a complete life strategy due to changing objectives and complex failure modes.

The user expressed surprise about their bones being wet. The assistant explained that living bones are naturally wet due to their water content, which contributes to their toughness, and reassured the user with humorous and vivid descriptions about the body's wet and living nature.

The trace explores how the task decomposition strategy of recursive language models (RLMs) compares to the implementation and goals of a specific repository, referred to as the "Sovereign Hive" orchestration CLI. The assistant researched RLMs, then examined the repo's orchestration core, verifying that its decomposition approach is currently a stub and differs fundamentally from RLMs' recursive invocation strategy.

The user requested analysis of an ATIF trajectory using the context-lens CLI with a modified taxonomy. The assistant converted the raw ATIF data into the required format, ran context-lens with the specified model, normalized the output labels, and published the cleaned export as a secret gist for viewing. After the user reported a parsing failure in the viewer, the assistant diagnosed a missing field in the analytics data, fixed the export, updated the gist, and verified successful loading in the Context Viewer.

The trace involves generating and running a script that triggers context compaction in an LLM session, to inspect what data is sent and verify whether uncompacted session data remains accessible to the user. The assistant wrote and executed the script, adjusted compaction thresholds, confirmed compaction behavior, and explained how uncompacted context remains accessible to users in a different form.

The user requested modifications to the /usr/local/bin/nextdns-doctor script to include checks for public DNS resolvers like Cloudflare and Google. The assistant added these checks, improved the output formatting for clarity, and updated the script to show the 'Common fixes' section only when failures occur, ensuring cleaner and more relevant output.

The trace documents converting a LaTeX-generated research paper PDF into an EPUB compatible with the 7th-generation Kindle Paperwhite. The assistant developed a custom build script with advanced heuristics for prose, tables, and the table of contents, performed multiple QA checks, and delivered the final EPUB file to the user's Downloads folder.

The user requested a summary of how larger projects are managed in the Traces system based on past projects in the code/traces directory. The goal is to create a comprehensive overview to share on social media after posting it to Traces.
